A blog on translation, AI, and terminology

As I mentioned in another article, I check and curate TMX files on consistency and flawlessness as a service.

The MemoQ and DejavuX3 CAT systems allow the direct import of TMX files as source files into a project (not into a new translation memory database, but into the translation project itself).

Trial versions of MemoQ CAT system are available from Kilgray here https://www.memoq.com/downloads.

Trial versions of DejavuX3 Professional CAT system are available from Atril under www.atril.com.

After import as a source file, you can process your TMX as any other file in a translation project.

The benefit of this approach is that you can see (and edit) everything that is inside: you see not only a spot of the TM as in the concordance search but the complete content.

The rest of the work is routine: Checking for terminology and style inconsistencies, mechanical errors (tabs, line breaks, codes, etc. which should not be there).

If you use SDL Studio, however, you cannot import your TMX as source files. You must convert your TMX into XLF files first.

I recommend Goldpan Editor for that purpose.

Goldpan Editor is a free tool developed by Logrus Global (www.logrusglobal.com) and converts your TMX file into XLF format. Afterward, you can process your TMX file in SDL Studio and use Goldpan Editor later to convert it back into TMX format.

If you want to know more about TMX maintenance as a service, send me a mail to mail@kubus-translations.net.

I offer TMX maintenance and return a curated TMX file, which means better matches and fewer inconsistencies from the TMX in your next project, less processing time, and lower cost.

As an extra, I produce a glossary list of the terminology included in the TMX and deliver this glossary in XLSX or TBX format.

As Slator recently pointed out (see: https://slator.com/industry-news/lsps-sue-canada-translation-bureau-over-polluted-translation-memories/), there is an interesting class action suit ag.jpg?auto=format,compress&fit=max&) ainst the Canadian Translation Office, a Federal agency of the Canadian Government. Several translation suppliers claim that they have been „cheated“ by the forced use of „polluted“ translation memories provided by the client (i.e., the Canadian Translation Office).

ainst the Canadian Translation Office, a Federal agency of the Canadian Government. Several translation suppliers claim that they have been „cheated“ by the forced use of „polluted“ translation memories provided by the client (i.e., the Canadian Translation Office).

As a worker at the end of the translation chain, you may have had such cases on a smaller scale. You can then either ask and hope for a small compensation or turn down the next offer from that client.

Nevertheless, the question remains:

How do you check the quality of a TM as a translator or as a language services supplier?

I recently started to check the quality of (external) translation memories with the same tools as used for my translation projects.

I check TMX files for consistency, mechanical errors, and spelling in MemoQ or with the external Verifika checker tool. The latter is faster, adaptable, and delivers an error report.

The consistency report may give you an indication of how many people contributed to the translation memory, if it was the product of quick and dirty alignment, or show how your style and terminology changed over the years.

This approach falls short for two reasons:

This method does not find exact but wrong matches. If one sentence was translated the wrong way but entered the TM as a confirmed translation, it will stay there unnoticed until it is retrieved one day AND is placed in a context that makes clear that it is wrong.

· It is a desktop tool, and TMs can be big or very big. Most of my own TMs have between 2,000 and 8,000 segments, i.e., 15,000 to 80,000 words, which is still manageable on a desktop. However, my biggest TMX file is 540MB, including 610,000 sentences, and here I enter into trouble.

What about you: Do you check the quality of TMs, and if so, how?

Grab technical terminology from your German source texts. All you need is MS Office, the QA tool Verifika, your German text copied in XLF or TMX format, and the German Hunspell spellchecker. Works also for smaller texts of 30-80 pages and will be available within minutes.

Imagine your have an urgent translation project of 80 pages which is to be split among three translators. Would be nice if they can start with a verified glossary list instead of discussing terminology "on the fly" during translation.

How do you do it without keeping them waiting too long?

Here is the hack:

The idea is to use an unamended, virgin spellchecker to find unknown and therefore probably technical terms in your German text. All words which are not part of the general spellchecker corpus are good candidates for your terminology list.

Why do I advertise this for German language?

German language coins technical terminology with compound nouns, which are of course not part of the general spellchecker corpus. This means, your number of term candidates will be higher than in isolating languages, where technical terms are simply formed from nouns put next to each other. Compare German technical terms like "Sechszylindermotor" with their English equivalents "six piston engine", or "Tintendruckkopf" with "inkjet printer head", and you see the difference.

Whereas "six" and "piston" and "engine" are all part of the general text corpus, the German "Sechszylindermotor" is not and goes into our terminology list.

Contrary to statistical terminology mining, you need neither a big text corpus, nor a stopword list to reduce linguistical noise. In fact, your spellchecker is your stopword list.

This means, the better you train your spellchecker with technical terminology, the lower the number of term candidates will be!

I use Verifika as QA tool.

Verifika is a QA tool similar to Apsic XBench. It uses the Hunspell spellchecker and delivers a list of unknown words within minutes. This list includes your terminology candidates which you can pretranslate and resolve with your Translation Memory system.

If you need a more detailed instruction, send me message to mail@kubus-translations.net.

Now and then you may have to amend the language variant of a finalized translation file afterwards.

There may be various reasons for this, either a misunderstanding on your side, missing or wrong language codes in the client’s project files, or you do it deliberately to make use of a large Translation Memory which you own, but which has a different language variant.

In my case, I often have texts in “international English” marked as “EN-US”, but also “EN-NZ”, and EN-IE”, whereas most of my TMs are “EN-GB”.

Normally I put that right at the beginning, inside my translation project, but sometimes I forgot about it.

So, if you have a file coded as “EN-US”, but your client wants a file coded as “EN-GB”, what do you do?

Do you set up as new project with the correct language variant and re-translate?

Not necessary.

XLF files (and variants as SDLXLIFF, MXLIFF, etc) are nothing else than text files, you can amend the language variant in your completed translation file afterwards using Search & Replace in a plain text editor like WordPad.

Open the file with WordPad and look for strings like these:

source-language="en-US" target-language="de-DE"

Replace the entry “en-US” with “en-GB”, and you are done.

Use EM text editor for that purpose. (Don’t use MS Word for XLF editing, if you do not know what you are doing. It may spoil your format and leave you with an unusable file.)

Re-open the file in your CAT system to check.

In SDL Studio, just to look for the language icons in the lower right corner:

.jpg?auto=format,compress&fit=max&)

SDLXLIFF file with the language variant “en-US” (top) and “en-GB” (below).

If you want to know more about XLF tinkering, check my blog regularly.

I recently had a unique problem with a job in XLF (XLIFF) format.

It came as a partly translated bilingual English-German file in UTF-8 as encoding, which is normally good enough to maintain the German umlauts as ü,ä,ö and the ß.

You will find this info in the first line of your XLF file if you open it with a plain text editor:

<?xml version="1.0" encoding="UTF-8"?>

<xliff version="1.1">

<file source-language="en" target-language="de" datatype="plaintext" original="xxxx.xliff">

However, I found that this project file included a mixture of correct umlauts and umlauts converted into codes:

Die argentinischen Übersetzer sind Erben jener Tradition, die darin besteht, Br[uuml]cken zwischen den Kulturen zu schlagen. Dieses Geschenk wurde dem Land von den zahlreichen Immigranten gemacht, die aus allen Teilen der Welt kamen, ihre Kultur mit sich brachten und sich intensiv um die Erhaltung dieser Traditionen bem[uuml]hten.

Interestingly, the capital U-umlaut is displayed correctly, whereas the small u-umlaut is not. All other umlauts were also displayed correctly.

The most probable explanation for this behaviour is that somebody produced this German text on a “foreign” machine with different encoding (Russian, Spanish, Hindi, or whatever). Whereas all characters may display correctly in his text editor, and most ones also in an XLF editor, a few special characters may not.

The solution?

Save XLF in UTF-16 format to be on the safe side. If your editor does not suggest this format, you may save in UTF-8 first and later modify the first line in your XLF file:

<?xml version="1.0" encoding="UTF-16"?>

Since XLF files (and variants as SDLXLIFF, MXLIFF, etc) are nothing else than special text files, you can amend such errors as above using Search & Replace in a plain text editor like Wordpad.

Replace all entries of [uuml] with ü and you are done.

I recommend EM text editor for that purpose. It is free, it is fast, and it processes very large files as well.

MS Word may be down with a text file of 600+ pages, EM text editor does it within a minute.

(Don’t use MS Word for such XLF editing, if you do not know what you are doing. It may spoil your format and leave you with an unusable file.)

If you want to know more about XLF tinkering, check my blog regularly.

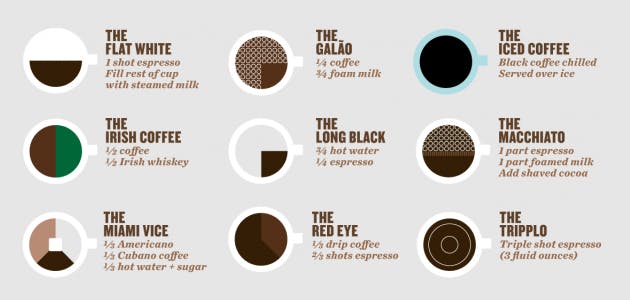

I recently found a nice and simple picture to explain the difference between words and terminology. In short, "coffee" is the beverage what you have in mind, and the terminology is what you read on the menu.

(The menu photo is taken from the http://www.copypanthers.com homepage.)

Problems use to develop if the author later falls back to "coffee" alone, without specifying if he refers to the Long Black, the Flat White, or the Macchiato...

In technology, your menu is usually a material data sheet, or a work standard. Just think about all the different nuts and bolts and shims.